More than a week has passed since Apple announced iOS 14 and iPadOS 14 at its Worldwide Developers Conference (WWDC). The conference has ended, and Apple’s operating systems have gotten their much-needed reboot.

Alongside iOS 14, Apple also announced updates to watchOS, macOS, and tvOS. To sum up the event, you could say that Apple gave users exactly what they wanted. And that really seems to be the best way to improve software: simply cater to user demand.

All these operating systems will hit your devices in September, right after the flagship iPhone 12 event, where Apple is also expected to introduce the next Apple Watch.

iOS 14 comes with a ton of new features. While most are under-the-hood improvements, Apple also introduced some mainstream changes that directly affect how you use your iPhone and iPad. These include widgets on the home screen, a streamlined and compact Siri experience, the ability to enter text by handwriting with the Apple Pencil on the iPad (Scribble), on-device dictation, and all kinds of machine learning smarts.

In other words, iOS 14 breaks some chains.

And while many features got the spotlight at the keynote, some didn’t and instead slipped under the radar. We get it, though. The WWDC keynote is bound by a time limit, and only so much can earn screen time. So only the most important features get announced on stage, while the rest are quietly pushed to your devices as part of the package.

But I think some of the features that quietly shipped with the first Developer Beta should have gotten more attention. They’re underrated, but they serve the bigger purpose of making your iPhone and iPad easier to use. The features I’m about to mention have a real impact on how you use your devices.

- iOS 14 Compatibility

- On-Device Dictation

- You can now hide the pages on your home screen

- Ability to scrub home screens using page dots

- The Files app now supports APFS encryption

- Back Tap

- FaceTime will now detect a person using sign language and make them prominent in a group FaceTime call

- Ability to share only your approximate location instead of your exact location

- Data detectors within handwritten text on iPadOS

- More Privacy Features

- Emoji search functionality in the native iOS keyboard

- Sound Recognition

- You can now perform web searches right from the native search bar

- Final Thoughts

iOS 14 Compatibility

The biggest and most underrated iOS 14 feature is something you can’t directly interact with: iOS 14 supports every device that received iOS 13 last year.

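Here are all the iPhones that iOS 14 supports: iPhone 6s and 6s Plus, iPhone SE (first generation), iPhone 7 and 7 Plus, iPhone 8 and 8 Plus, iPhone X, iPhone XR, iPhone XS and XS Max, iPhone 11, 11 Pro, and 11 Pro Max, and the second-generation iPhone SE.

And iPadOS 14 supports every iPad Pro model, the iPad Air 2 and later, the iPad (fifth generation) and later, and the iPad mini 4 and later.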

This isn’t always the case; Apple usually drops a few older iPhones and iPads with each major release. Keeping the whole lineup speaks to the longevity of Apple’s hardware, the efficiency of its software, or both. It’s fantastic.

On-Device Dictation

Dictation is a super-useful feature that I use every day. It’s just better when you don’t have to use your fingers. And Apple is making it even better with iOS 14 by moving it on-device.

On-device dictation is kind of a big deal. According to a Google blog post announcing the feature for Gboard, its on-device speech recognizer is “compact enough to reside on a phone. This means no more network latency or spottiness— the new recognizer is always available, even when you are offline. The model works at the character level, so that as you speak, it outputs words character-by-character, just as if someone was typing out what you say in real-time, and exactly as you’d expect from a keyboard dictation system.”

iOS 14 seems to take a similar approach, and it shows in the first Developer Beta. Dictating from the keyboard is much faster. It’s also better from a privacy standpoint, since everything you dictate stays on the device instead of being processed on Apple’s servers.

As of now, though, iOS 14 only offers on-device dictation within the keyboard. Dictation everywhere else in iOS 14 is still processed on Apple’s servers.

It’ll be interesting to see how this technology evolves. Maybe Apple will eventually offer on-device dictation throughout iOS?

You can now hide the pages on your home screen

iOS 14 now lets you hide home screen pages. It was announced on stage, but it’s still quite an underrated feature. Why?

Because people don’t yet know what they can do with it. The Verge’s Dieter Bohn suggests you could “have a page (or three) set up for work, but when it’s time for the weekend you could uncheck them and hide all those apps in exchange for a weekend page.”

I don’t think that’s, well, possible. People have a core set of apps they use regardless of the day of the week or whatever they’re doing. So unless Apple allows the same app to appear on multiple pages, that’s a pipe dream.

What you can do instead, along the lines of what Dieter said, is put all the apps you use only at work on one page and hide it when you’re not working. Another way to benefit from hidden pages is to move all the apps that previously lived in your “Extras” folder onto a single page and hide it.

There are other ways this feature may help, but the other ideas that come to mind are too half-baked to share here. Given how much customization the feature potentially offers, it’s quite underrated at the moment.

However, I’m excited to see people put it to creative use once iOS 14 is released to the public.

Ability to scrub home screens using page dots

This isn’t a revolutionary feature by any measure. But it does fix one of people’s biggest frustrations with the home screen on iOS 13.

On versions prior to iOS 14, it’s difficult to jump directly to the last page (or any page in the middle) of the home screen. You can tap the dots corresponding to the page you want, but they’re far too small to hit reliably.

Suffice it to say, they’re not made for tapping with our thick human fingers.

iOS 14 fixes that. The old method of tapping the dots still exists, but the page dots now also sit on a highlighted backdrop. Tap and hold on them, then swipe in either direction to quickly summon the page you want.

It’s underrated because it makes navigating between home screen pages quick and easy, yet it hasn’t garnered much attention online. It seems minor on paper, but it proves its worth in practice. Trust me, it’ll save you a ton of time and frustration, and many people will appreciate it going forward.

The Files app now supports APFS encryption

iOS 14 brings APFS encryption support to the Files app. That’s great news, because plenty of users own SSDs or flash drives formatted with APFS.

Why is this important? APFS (Apple File System) is Apple’s proprietary file system and the successor to the older HFS+, with built-in support for strong encryption, snapshots, and near-instant file copies. Bringing APFS encryption support to the native Files app means you can reap those benefits right on your iPad, which is the device people are most likely to connect a flash drive to.

APFS encryption support in the Files app is underrated because APFS itself is powerful. Copying a file within an APFS volume takes virtually no time, since the file system clones it instead of duplicating the data, and it handles large amounts of data efficiently. And now you can unlock encrypted external drives right from your iPad and iPhone. To read in depth about APFS, check out this Backblaze article.

Back Tap

“Back Tap” is a new accessibility feature in iOS 14 that feels like a gimmick but is valuable if you use it correctly.

It essentially lets you tap on the back of your iPhone either twice or thrice to fire up different functions. When I double-tap on the back of my iPhone, it takes a screenshot. That’s easier than struggling to press two buttons at once.

You can set it to do different things. One of the more mischievous options is to set a shortcut that brings up Google Assistant on a double-tap. With Back Tap, Google Assistant feels closer to native on an iPhone than ever.

There are two caveats with Back Tap. The first is that it seems to be available only on iPhones with notches. Even the 2020 iPhone SE misses out. This may be due to hardware limitations, since the feature probably uses the accelerometer and gyroscope to detect taps on the back, and for some reason, iPhones with the older form factor don’t get it.

The other catch is that Back Tap is prone to false triggers; it activates easily by accident. There have been countless instances where I placed my iPhone on a surface and it registered a double-tap. To counter this, you can use triple-tap instead, but that doesn’t guarantee accidental triggers will vanish. Hopefully, Apple refines it over the course of the beta releases.
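Apple hasn’t documented how Back Tap detects taps, but a toy model shows why setting your phone down can register as a double-tap and why triple-tap helps without fully solving the problem. The Python sketch below is a hypothetical, naive detector: it treats any accelerometer spike above a threshold as a tap and counts spikes that arrive close together. The sample readings, the `tap_times` and `detect_gesture` functions, and all thresholds are assumptions made up for illustration.

```python
from typing import List, Optional, Tuple

# Hypothetical tuning values; Apple has not published how Back Tap works.
SPIKE_THRESHOLD_G = 2.5  # acceleration magnitude (in g) treated as a tap
MAX_GAP_S = 0.4          # max seconds between taps belonging to one gesture
REFRACTORY_S = 0.08      # ignore spikes this close to the previous one (same tap)

Sample = Tuple[float, float]  # (time in seconds, acceleration magnitude in g)


def tap_times(samples: List[Sample]) -> List[float]:
    """Return the timestamps of spikes that count as individual taps."""
    taps: List[float] = []
    for t, magnitude in samples:
        if magnitude >= SPIKE_THRESHOLD_G and (not taps or t - taps[-1] > REFRACTORY_S):
            taps.append(t)
    return taps


def detect_gesture(samples: List[Sample], required_taps: int) -> bool:
    """True if `required_taps` taps occur in a row, each within MAX_GAP_S of the last."""
    run = 0
    previous: Optional[float] = None
    for t in tap_times(samples):
        # Extend the current run if this tap follows closely enough, else restart it.
        run = run + 1 if previous is not None and t - previous <= MAX_GAP_S else 1
        previous = t
        if run >= required_taps:
            return True
    return False


# Hypothetical readings: the phone lands on a table (thud) and bounces once.
phone_set_down = [(0.00, 1.0), (0.05, 3.0), (0.30, 2.6), (0.60, 1.0)]
# Hypothetical readings: three deliberate taps on the back.
deliberate_taps = [(0.00, 3.1), (0.20, 3.0), (0.45, 2.9)]

print(detect_gesture(phone_set_down, 2))   # True: a false double-tap
print(detect_gesture(phone_set_down, 3))   # False: this bounce doesn't fake a triple-tap
print(detect_gesture(deliberate_taps, 3))  # True
```

A real implementation would presumably combine accelerometer and gyroscope data with far more sophisticated filtering. The point is only that a thud plus a bounce looks a lot like two deliberate taps, while three spikes in a row is a rarer coincidence, though not an impossible one.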

With support for running shortcuts, too, Back Tap has great potential. You could run a “morning rundown” shortcut without even waking the screen, making your mornings more productive and less distracting, because, well, you might be saved from the smartphone rabbit hole.

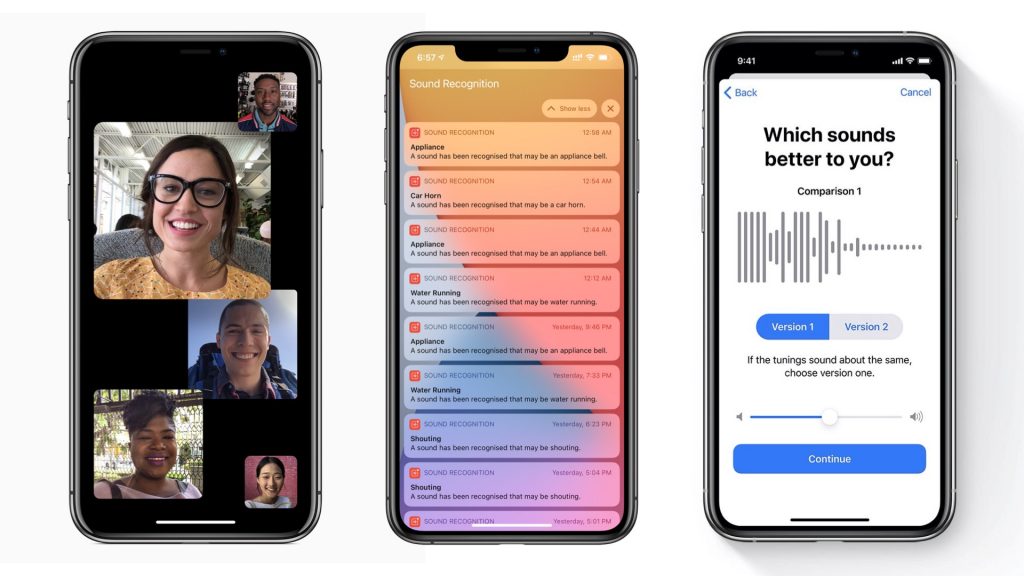

FaceTime will now detect a person using sign language and make them prominent in a group FaceTime call

iOS 14 puts a lot of emphasis on accessibility. That much was expected. What wasn’t expected is how well it does so.

One of the new accessibility features comes to FaceTime. People with hearing disabilities who use sign language to communicate will now appear prominently while signing during group FaceTime video calls.

For those unaware, FaceTime already makes the tiles of people actively speaking in a group video call more prominent: the tile of the person currently speaking grows in size relative to everyone else’s.

Extending this to sign language makes it especially useful for people with hearing disabilities. And truth be told, like most accessibility features, it’s awfully underrated.

Ability to share only your approximate location instead of your exact location

Privacy is becoming increasingly important. And more than ever, the location services on your phone are responsible for a lot of the data being shared about you online.

Apple puts considerable emphasis on privacy in iOS and iPadOS, and iOS 14 accentuates that. You can now choose to share your approximate location rather than your precise one. This is especially helpful with maps services. For instance, if you want to find cafes around you, a maps service doesn’t need your precise location to help, so you can grant approximate location instead.

Sharing approximate location helps in other ways as well. Many advertisers use your precise location to track you and serve ads. Say, for instance, you’re in a physical Armani store. If you’re sharing your precise location at the time, advertisers can infer exactly why you were there, and you might soon start seeing Armani and other clothing ads online.

This can feel overwhelming if it happens frequently, and eventually it crosses a line into feeling obtrusive. Sharing approximate location, by contrast, doesn’t reveal such specific information to advertisers. Consequently, it considerably limits their ability to track you and serve obtrusive ads.
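Apple hasn’t detailed how iOS 14 computes an approximate location, but the general idea of coarsening can be sketched in a few lines of Python. In this hypothetical example, the `coarsen` function and its 0.05-degree grid (about 5.5 km from north to south) are assumptions chosen for illustration: every precise fix inside a grid cell is replaced by the cell’s center, so an app, or an advertiser downstream of it, can no longer tell the store apart from the cafe next door.

```python
import math
from typing import Tuple

# Hypothetical grid size: 0.05 degrees of latitude is roughly 5.5 km.
CELL_DEG = 0.05


def coarsen(lat: float, lon: float, cell_deg: float = CELL_DEG) -> Tuple[float, float]:
    """Snap a precise coordinate to the center of the grid cell that contains it.

    Every point inside the same cell maps to the same output, so nearby
    places become indistinguishable to whoever receives the result.
    """
    def snap(value: float) -> float:
        return round((math.floor(value / cell_deg) + 0.5) * cell_deg, 6)

    return snap(lat), snap(lon)


# Two nearby precise fixes (hypothetical coordinates).
store = (40.7128, -74.0060)
cafe_next_door = (40.7130, -74.0062)

print(coarsen(*store))           # (40.725, -74.025)
print(coarsen(*cafe_next_door))  # (40.725, -74.025)
```

One limitation of a fixed grid is that two points on either side of a cell boundary end up in different cells, so real systems may well use a different scheme.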

The impact this feature has, and will have, on your online life is significant. You can choose to share either approximate or precise location on a per-app basis. Despite the control this brings, it’s underrated.

Data detectors within handwritten text on iPadOS

iPadOS 14 now automatically detects data in handwritten text. The feature may not seem like much now, but its value will show with daily use once iPadOS 14 comes out in September.

The data it can detect and act on includes phone numbers, addresses, and more. There are plenty of moments when you need to quickly jot down important information like a number or an address with the Apple Pencil while you’re doing other things on your iPad.

Now, you won’t need to copy that information manually. Simply tap the handwritten text, and your iPad offers options to act on it, such as tapping a number to save it as a contact or tapping a written address to view it in the Maps app.

Now, I know you might be skeptical about this one, and I’ll grant you that. But trust me, this feature has a lot of potential.
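Once handwriting has been recognized as text, spotting a phone number in it is essentially a pattern-matching problem. The Python sketch below is a deliberately simple, hypothetical illustration using a regular expression for North American-style numbers; it is not how iPadOS does it, and `PHONE_PATTERN` and `find_phone_numbers` are names made up for the example. On Apple’s platforms, developers get a much more robust detector for phone numbers, addresses, links, and dates through Foundation’s `NSDataDetector`.

```python
import re
from typing import List

# Hypothetical, deliberately simple pattern for North American-style numbers
# such as "(555) 123-4567", "555-123-4567" or "555.123.4567".
PHONE_PATTERN = re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b")


def find_phone_numbers(text: str) -> List[str]:
    """Return every substring of recognized text that looks like a phone number."""
    # The pattern has no capture groups, so findall returns the whole matches.
    return PHONE_PATTERN.findall(text)


# Text as it might come out of handwriting recognition (hypothetical).
note = "Call Sam at (555) 123-4567 or the office on 555.987.6543 after lunch"
print(find_phone_numbers(note))  # ['(555) 123-4567', '555.987.6543']
```

A production detector has to cope with international formats, extensions, and recognition errors, which is why a single regex like this one is only a starting point.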

More Privacy Features

I don’t need to stress this. You already know it. Privacy is paramount to the iOS “experience.”

iOS 14 ships with a ton of privacy-oriented features that shield you from the rest of the world. For one, there’s a new Private Address option in Wi-Fi settings that hides your device’s real MAC address if you choose to enable it. This protects you from a lot of network snooping and may even help you get around Wi-Fi time limits at airports.

Another blow to privacy invaders is limited photo access. You can give an app access to only the photos you select from your Photo Library instead of handing over everything in it.

That means the bad actors behind some apps can no longer see your entire camera roll unless you let them. When an app requests access, iOS 14 prompts you to either share the entire library or choose the specific photos you want to provide.

Then there’s a new notification in iOS 14 that drops down when an app reads data from the clipboard. TikTok is one of many apps that have come under scrutiny over the past few days because of this feature: people running the iOS 14 beta are seeing clipboard notifications with every keystroke in the app. The discovery has stirred up some controversy, with TikTok saying the behavior is an anti-spam feature and that it will finally be removed.

But it’s good to know that people are finally informed about such practices as they happen.

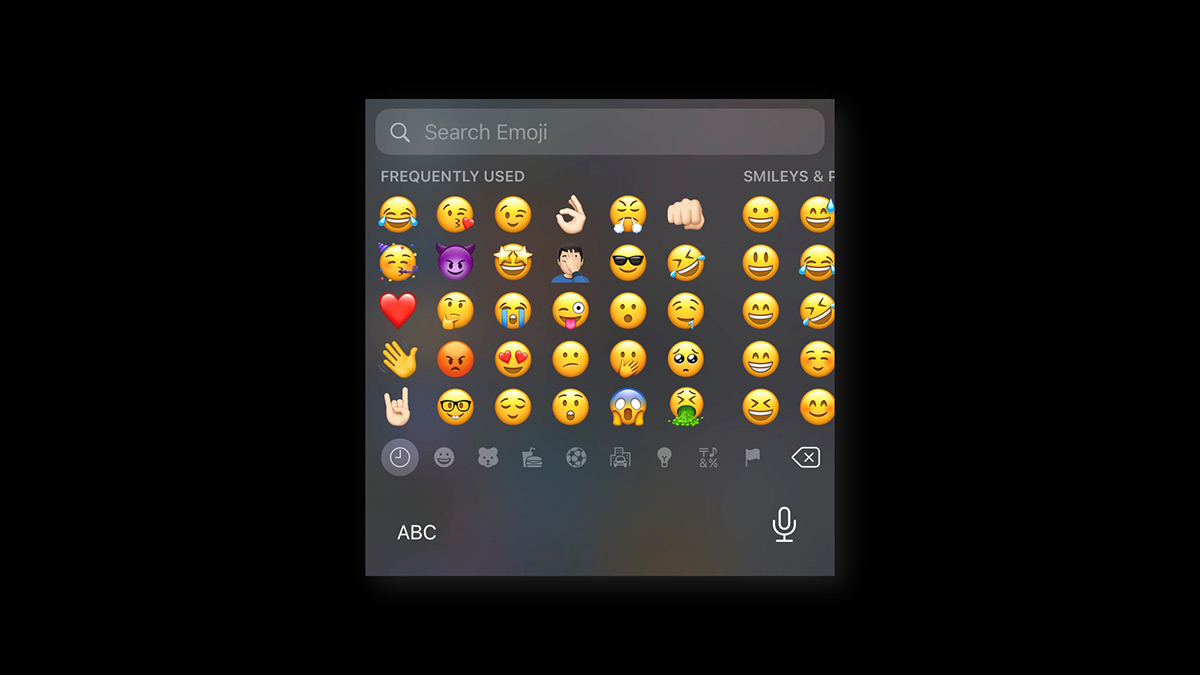

Emoji search functionality in the native iOS keyboard

The native iOS keyboard is great as it is. It’s versatile, quick, and, most importantly, secure.

For some reason, I can’t keep my hands off it. No matter which third-party keyboard I try, I always come back to the stock iOS keyboard. Now, thanks to iOS 14, I’ve got one more reason to stick with it.

If you’ve read my Gboard vs iOS Keyboard piece, you’ll know that the main reason I’d ever pick Gboard is that it offers more features. One of those features is emoji search within the keyboard itself, and now it’s coming to the native iOS keyboard as well.

We use emoji constantly. They help us express our feelings and communicate better. But until now, the biggest inconvenience with emoji has been that there are so many of them, which makes it hard to find the right one quickly.

This may not be a big deal for some. But for a lot of people, it’ll make using the keyboard much less vexatious. Moreover, and most importantly, it’ll save some time along the way.

Sound Recognition

iOS 14 comes with yet another accessibility feature that listens for certain sounds in your surroundings and notifies you when your iPhone identifies one.

Sound Recognition can detect sounds like fire alarms, sirens, smoke alarms, cats, dogs, household appliances, and even a baby crying. This is useful for people with hearing disabilities, since the iPhone can now alert them to sounds they might not be able to hear.

It’s underrated considering how precise it is: almost every time someone started shouting around me, my iPhone picked it up. More interestingly, people without hearing disabilities can use it as well, albeit in unusual ways.

For example, you could use it as a makeshift baby monitor. Place the iPhone in the same room as your baby, and when it detects crying, it’ll send a notification that also shows up on your Apple Watch.

You can now perform web searches right from the native search bar

Before iOS 14, you could swipe down on your home screen to access a search bar that Apple calls “Spotlight Search” to quickly find things within the operating system, including apps, contacts, and files. It should have been able to do more.

With iOS 14, it does. Before I get to the crux of this point, I want to touch on the peripheral improvements to Spotlight Search in iOS 14. There’s a new backdrop behind the app that best matches your search terms. If Spotlight is confident you’re looking for a certain app, you can jump straight into it without having to tap twice.

Search results are also more detailed and precise. Along those lines, they now include rich data from the web. Type “How tall is Obama?” into the search bar, and iOS shows a native result pulled straight from Wikipedia.

This feature feels like it brings Google’s featured snippets to your iPhone’s Spotlight Search bar, and it’s a really convenient way to find information from the web.

Siri has gained similar functionality, too, as it has gotten smarter.

Final Thoughts

Neither iOS nor iPadOS is perfect yet; there’s room for improvement here and there. But as mobile operating systems mature with every release, there’s very little innovation left to do.

Windows is the best example of a mature operating system. So much so that Microsoft has stopped releasing new numbered versions of Windows: Windows 10 will remain Windows 10, although it still receives improvements through regular updates.

Mobile operating systems are on a similar path. Unless there’s a revolution in how we use our devices, the software will soon stagnate. And it seems like a revolution is underway, with Apple bringing the Mac, iPad, and iPhone software closer together than ever.

While these features in iOS 14 and iPadOS 14 are underrated, they serve a much bigger purpose: inching closer to a perfect mobile operating system. But does perfection exist in reality?